Agentic AI Data Leaks: 2026 Risks & Actionable Security Fixes

AI Data Leaks in 2026: How Agentic Workflows Expose Secrets (And How to Fix It)

By 2026, Gartner predicts that 60% of enterprises will experience AI-driven data leaks—not from external hackers, but from the very tools designed to streamline their workflows. The culprit? Agentic AI workflows: autonomous systems that make decisions, execute tasks, and interact with APIs and data without constant human oversight. These workflows are becoming the backbone of modern business operations, from DevOps pipelines to customer service bots. Yet, they’re also creating new, often invisible, attack surfaces that traditional security tools weren’t designed to handle.

Consider the UNC6426 breach, where attackers compromised AWS environments by publishing a malicious nx package to npm. The exploit didn’t rely on phishing or brute force—it leveraged the autonomous nature of AI-driven DevOps tools that automatically fetched and executed the package. Or take Samsung’s 2023 ChatGPT leak, where employees pasted proprietary code into a public LLM, exposing sensitive intellectual property. These incidents aren’t outliers; they’re the new normal as agentic workflows proliferate.

This article explains how agentic workflows leak data, walks through real-world exploits, and, most importantly, lays out actionable steps to audit and secure your AI systems before it’s too late.

How Agentic Workflows Become Data Leak Vectors (And Why It’s Worse in 2026)

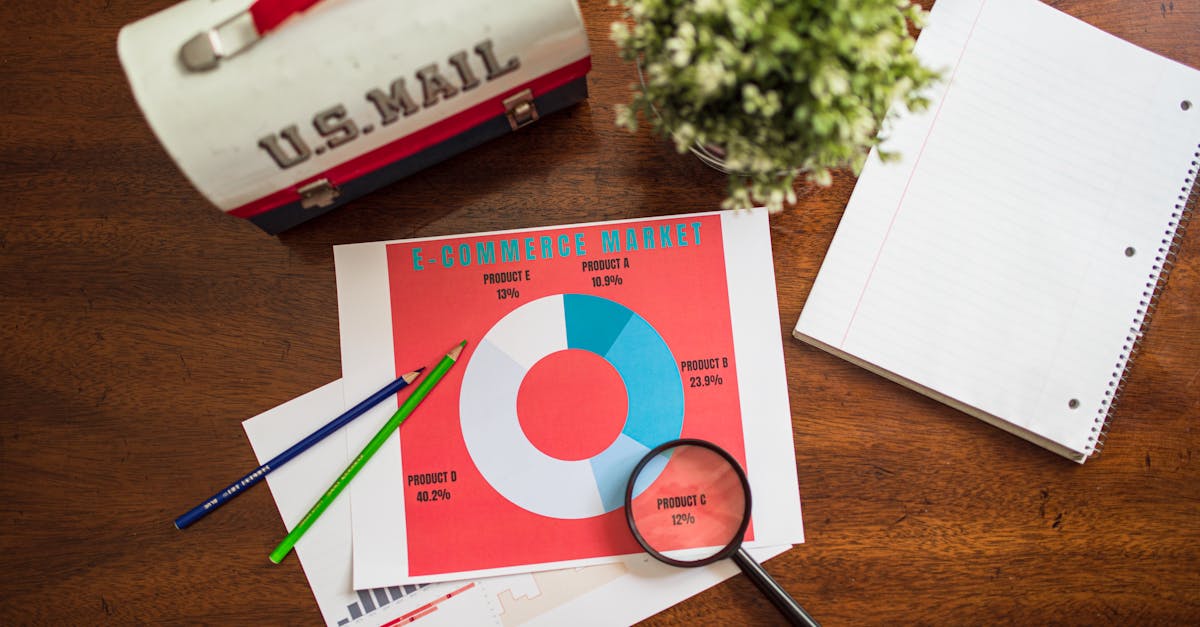

Photo by RDNE Stock project on Pexels

Autonomy = Expanded Attack Surface

Agentic workflows are designed to act independently, which means they can access, process, and share data without human intervention. This autonomy is a double-edged sword:

- Slack bots can pull customer data from CRM systems and share it in channels.

- GitHub Copilot can suggest code snippets containing hardcoded API keys or PII.

- Custom LLMs can autonomously query databases, retrieve sensitive records, and generate reports—all without logging or oversight.

The problem? These agents don’t just handle data—they transform it. A misconfigured AI agent in a CI/CD pipeline might expose API keys in logs, or a customer service bot could inadvertently leak PII in a chat transcript. Unlike traditional software, where data flows are predictable, agentic workflows adapt and evolve, making leaks harder to detect.
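For the CI/CD case, one mitigation is to scrub secrets from log messages before any handler writes them. The sketch below uses Python’s standard `logging.Filter` hook. The two patterns (the `AKIA` prefix format of AWS access key IDs and generic HTTP `Bearer` tokens) are illustrative assumptions, not a complete secret-detection ruleset, and the `ci-agent` logger name is hypothetical.

```python
import logging
import re

# Illustrative patterns only; a production ruleset would need many more.
SECRET_PATTERNS = [
    re.compile(r"\bAKIA[0-9A-Z]{16}\b"),             # AWS access key ID format
    re.compile(r"(?i)\bbearer\s+[a-z0-9._~+/=-]+"),  # HTTP bearer tokens
]


def scrub(text: str) -> str:
    """Replace every pattern match in text with a fixed placeholder."""
    for pattern in SECRET_PATTERNS:
        text = pattern.sub("[REDACTED]", text)
    return text


class SecretScrubFilter(logging.Filter):
    """Rewrites each log record's message before any handler emits it."""

    def filter(self, record: logging.LogRecord) -> bool:
        # Render the final message (msg % args) once, scrub it, and clear args
        # so handlers don't try to re-apply formatting to the rendered text.
        record.msg = scrub(record.getMessage())
        record.args = None
        return True  # keep the record, with secrets masked


logger = logging.getLogger("ci-agent")  # hypothetical logger name
logger.addFilter(SecretScrubFilter())
```

A filter attached to a logger does not run on records propagated up from child loggers; if several loggers share output, attach the filter to the handler instead.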

Supply-Chain Risks in AI Tools

Agentic workflows don’t operate in isolation. They rely on third-party dependencies, from npm packages to pre-trained models, which introduce supply-chain risks:

- Malicious packages: In the UNC6426 breach, attackers published a compromised nx package to npm, which was automatically fetched by CI/CD pipelines. The package stole AWS credentials, leading to lateral movement and data exfiltration.

- Training data leaks: Samsung’s ChatGPT incident showed how easily sensitive data leaves an organization through public LLMs. Once an employee pastes proprietary code into a public chatbot, the provider may retain it and, depending on its data-use policy, use it for training; a model that memorizes that data could later reproduce it in another user’s output.

- Model poisoning: Attackers can manipulate training data to embed backdoors or biases. For example, a healthcare AI agent trained on poisoned data might leak patient records when queried in a specific way.

The 2026 Factor: AI at Scale

Gartner estimates that by 2026, 30% of enterprises will use agentic AI, up from less than 5% in 2023. Three trends shape the risks that come with this rapid adoption:

- Shadow AI: Employees are using unvetted AI tools to boost productivity. Salesforce’s 2025 report found that 68% of workers use AI tools not approved by IT, from personal LLMs to custom agents.

- AI-driven DevOps: Tools like GitHub Copilot and autonomous CI/CD agents are blurring the line between development and production, increasing the risk of accidental exposure.

- Regulatory pressure: The EU AI Act and NIST’s AI Risk Management Framework are pushing organizations to secure AI systems, but many lack the tools or expertise to comply.

Real-World AI Data Leaks: Case Studies of Agentic Workflow Exploits

Photo by Google DeepMind on Pexels

Case 1: UNC6426’s AWS Compromise via npm

What happened:

In 2024, the threat group UNC6426 (linked to North Korea) published a malicious nx package to npm, a popular JavaScript package registry. The package contained obfuscated code that stole AWS credentials from CI/CD pipelines. When DevOps teams ran npm install, the package executed automatically, exfiltrating credentials to an attacker-controlled server.

Agentic workflow flaw: The breach exploited autonomous CI/CD agents that fetch and execute dependencies without human review. These agents are designed to streamline development, but because they installed and ran new package versions without verifying them, they were exposed to supply-chain attacks.

Impact:

- Lateral movement into AWS environments.

- Data exfiltration, including customer records and proprietary code.

- Compliance violations under GDPR and HIPAA (if healthcare data was exposed).

Key takeaway: Agentic workflows amplify supply-chain risks. A single compromised dependency can lead to a full-scale breach.

Case 2: AI-Powered Phishing & Credential Harvesting

What happened: In 2025, Proofpoint reported a 40% increase in AI-driven phishing attacks. Attackers used custom LLMs to generate highly convincing phishing emails, complete with stolen branding and personalized details. In one case, a financial services firm’s AI email drafting tool was tricked into generating phishing templates using stolen customer data.

Agentic workflow flaw: The AI agent lacked output validation, allowing it to generate malicious content. Additionally, the agent autonomously pulled customer data from CRM systems to personalize emails, so every malicious template it produced could carry real customer details.

Impact:

- Credential harvesting at scale.

- Penalties under PCI DSS (for payment card data exposure).

- Reputational damage from targeted phishing campaigns.

Key takeaway: AI agents can inadvertently become attack vectors if their outputs aren’t sanitized.

Case 3: Training Data Poisoning & Model Inversion

What happened: A healthcare provider deployed an AI agent to analyze patient records and flag high-risk cases. Attackers poisoned the training data by injecting fake records containing PII. When the model was later queried, it leaked real patient data in its responses. Two distinct techniques are combined here: data poisoning corrupts what the model learns, while the leak itself is a model inversion attack, in which attackers recover training data from a model’s outputs.

Agentic workflow flaw: The training pipeline lacked input validation, allowing poisoned data to slip through. Additionally, the agent didn’t log or audit its queries, making it impossible to trace the leak.

Impact:

- GDPR fines of up to €20 million or 4% of global annual turnover, whichever is higher.

- Loss of patient trust and potential lawsuits.

- Compliance violations under HIPAA.

Key takeaway: AI training pipelines are high-risk vectors for data leaks. Without proper validation, even "secure" models can become liabilities.

Why Traditional Security Fails Against AI Data Leaks

AI Agents Bypass Traditional Controls

Traditional security tools like Data Loss Prevention (DLP) and Cloud Access Security Brokers (CASBs) were designed for static, human-driven workflows. They struggle with agentic AI for three reasons:

- DLP misses AI-generated data: AI agents can rephrase, obfuscate, or encrypt data in ways DLP tools can’t detect. For example, an AI agent might replace "Project Phoenix" with "Project P" in logs, evading keyword-based filters.

- CASBs can’t inspect AI workflows: Many AI agents operate outside sanctioned cloud apps, using APIs or local processing that CASBs can’t monitor.

- Behavioral analysis fails: AI agents adapt their behavior based on context, making it hard for anomaly detection systems to flag suspicious activity.

Example: A financial AI agent flags fraudulent transactions but leaks PII in its logs. Traditional DLP tools miss the leak because the PII sits in nested fields of structured JSON responses rather than in the free-text fields the tools scan.
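A filter that only scans flat text never reaches values nested inside a JSON payload. As a minimal sketch, the code below walks every string in a parsed JSON document and reports the path of each value that matches a simplified email or US Social Security number pattern. The detectors and the sample log entry are hypothetical; real DLP engines use much richer recognizers.

```python
import json
import re
from typing import Any, Iterator

# Simplified detectors for illustration; they will miss many real formats.
DETECTORS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def find_pii(node: Any, path: str = "$") -> Iterator[tuple[str, str]]:
    """Yield (json_path, detector_name) for every string value that matches."""
    if isinstance(node, dict):
        for key, value in node.items():
            yield from find_pii(value, f"{path}.{key}")
    elif isinstance(node, list):
        for index, item in enumerate(node):
            yield from find_pii(item, f"{path}[{index}]")
    elif isinstance(node, str):
        for name, pattern in DETECTORS.items():
            if pattern.search(node):
                yield path, name


# Hypothetical agent log entry: the PII sits two levels deep.
log_line = json.dumps({
    "event": "fraud_check",
    "result": {"flagged": True, "customer": {"contact": "jane@example.com"}},
})

for hit in find_pii(json.loads(log_line)):
    print(hit)  # → ('$.result.customer.contact', 'email')
```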

The "Black Box" Problem

AI agents operate as black boxes, making it difficult to audit their decisions. For example:

- Why did the agent access this database? Without explainable AI (XAI) tools, it’s hard to trace the agent’s reasoning.

- What data did the agent process? Many AI agents don’t log their inputs/outputs, making leaks impossible to investigate.

Example: A legal AI agent redacts documents but leaks metadata (e.g., author names, timestamps) in its outputs. Without proper logging, the leak goes undetected.

Shadow AI & Unvetted Tools

68% of employees use unapproved AI tools, per Salesforce’s 2025 report. These tools often lack:

- Input validation: Nothing stops employees from pasting sensitive data into public LLMs.

- Encryption: Many personal AI tools don’t encrypt data in transit or at rest.

- Access controls: Unvetted tools may store data in unsecured cloud buckets.

Example: A marketing team uses an unvetted AI tool to analyze customer data. The tool stores the data in an unencrypted S3 bucket, leading to a leak.

How to Audit Agentic Workflows for Data Leaks

Photo by Google DeepMind on Pexels

Step 1: Map Your AI Attack Surface

Actionable steps:

- Inventory AI agents: List all autonomous agents in your organization, including:

- Slack/Teams bots.

- GitHub Actions/CI/CD agents.

- Custom LLMs or fine-tuned models.

- Third-party AI tools (e.g., Copilot, Midjourney).

- Track data flows: Use tools like OpenTelemetry or Datadog to monitor:

- What data each agent accesses.

- Where the data is sent (e.g., APIs, cloud storage).

- How the data is transformed (e.g., redaction, encryption).

- Classify data: Label data by sensitivity (e.g., PII, proprietary code, financial records) and restrict agent access accordingly.
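Classification only helps if it is tied to the agent inventory. As a minimal sketch, assume a hypothetical four-level sensitivity scale and a hand-maintained inventory in which each agent records the highest label it is cleared for, the highest label its task needs, and where its output goes (all agent names and destinations below are made up). Any agent cleared above its need is a candidate for tightening:

```python
from dataclasses import dataclass
from enum import IntEnum


class Sensitivity(IntEnum):
    """Hypothetical ordered labels; higher values are more sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2  # e.g. proprietary code
    RESTRICTED = 3    # e.g. PII, financial records


@dataclass
class AgentRecord:
    name: str
    clearance: Sensitivity         # highest label the agent can read today
    needed: Sensitivity            # highest label its task actually requires
    destinations: tuple[str, ...]  # where its output flows, kept for data-flow review


def over_permissioned(inventory: list[AgentRecord]) -> list[str]:
    """Return names of agents cleared for more sensitive data than they need."""
    return [agent.name for agent in inventory if agent.clearance > agent.needed]


inventory = [
    AgentRecord("slack-support-bot", Sensitivity.RESTRICTED, Sensitivity.INTERNAL, ("#support",)),
    AgentRecord("ci-release-agent", Sensitivity.CONFIDENTIAL, Sensitivity.CONFIDENTIAL,
                ("s3://build-artifacts",)),
]

print(over_permissioned(inventory))  # → ['slack-support-bot']
```

Keeping `destinations` in the same record means the data-flow map and the access review come from one source; the function itself only compares the two labels.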

Tools to use:

- OpenTelemetry: For tracing data flows in AI agents.

- Datadog: For monitoring agent behavior in real time.

- AWS IAM Access Analyzer: For identifying over-permissioned agents.

Step 2: Implement AI-Specific Controls

Actionable steps:

- Input/output validation:

- Sanitize inputs: Block PII, API keys, or proprietary code from being sent to AI agents.

- Sanitize outputs: Use tools like Microsoft Presidio to detect and redact sensitive data in AI outputs.

- Least-privilege access:

- Restrict AI agents to only the data they need.

- Use temporary credentials (e.g., AWS STS) for cloud access.

- Encryption:

- Encrypt data in transit (TLS 1.3) and at rest (AES-256).

- For extra security, use GhostShield VPN’s WireGuard-based encryption to protect data flowing between AI agents and APIs.
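The input/output validation step can be sketched as a wrapper around the model call that blocks prompts containing sensitive values and redacts anything sensitive in the reply. Microsoft Presidio does the detection with trained recognizers; this dependency-free sketch swaps in three regexes instead, so treat the patterns, the `guarded_call` wrapper, and the `fake_model` stub as hypothetical placeholders for your own detectors and LLM client.

```python
import re
from typing import Callable

# Hypothetical stand-ins for Presidio-style recognizers; far from exhaustive.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "AWS_ACCESS_KEY": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


class SensitiveInputError(ValueError):
    """Raised when a prompt contains data that must not reach the model."""


def redact(text: str) -> str:
    """Replace each detected value with a typed placeholder such as <EMAIL>."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"<{label}>", text)
    return text


def guarded_call(prompt: str, call_model: Callable[[str], str]) -> str:
    """Block sensitive prompts outright; redact sensitive values in the reply."""
    found = [label for label, pattern in PATTERNS.items() if pattern.search(prompt)]
    if found:
        raise SensitiveInputError(f"prompt blocked, contains: {', '.join(found)}")
    return redact(call_model(prompt))


def fake_model(prompt: str) -> str:
    """Stub LLM whose reply leaks an email address, to exercise output redaction."""
    return f"Summary of: {prompt}. Contact ops@example.com for details."


print(guarded_call("Summarize order 42", fake_model))
# → Summary of: Summarize order 42. Contact <EMAIL> for details.
```

Blocking on input and redacting on output mirrors the two bullets above: a prompt with an SSN never leaves your boundary, while a reply that happens to contain an address is cleaned rather than discarded.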

Example: A customer service bot should never access full customer records—only the fields it needs (e.g., name, order status). Use attribute-based access control (ABAC) to enforce this.
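The customer service example translates into a small attribute-based check: a policy maps an agent’s role and purpose attributes to the record fields it may see, and everything else is dropped before the record reaches the agent. The role names, purposes, and record layout below are hypothetical, and a full ABAC engine would also evaluate resource and environment attributes.

```python
from typing import Any

# Hypothetical policy: (agent role, purpose) -> fields that agent may receive.
FIELD_POLICY: dict[tuple[str, str], frozenset[str]] = {
    ("support_bot", "order_status"): frozenset({"name", "order_status"}),
    ("billing_agent", "refund"): frozenset({"name", "order_id", "last4"}),
}


def filter_record(record: dict[str, Any], role: str, purpose: str) -> dict[str, Any]:
    """Return only the fields the (role, purpose) pair may see.

    Unknown role/purpose combinations get an empty view (deny by default).
    """
    allowed = FIELD_POLICY.get((role, purpose), frozenset())
    return {key: value for key, value in record.items() if key in allowed}


customer = {
    "name": "J. Doe",
    "order_status": "shipped",
    "email": "jdoe@example.com",
    "address": "1 Main St",
    "order_id": "A-1001",
}

print(filter_record(customer, "support_bot", "order_status"))
# → {'name': 'J. Doe', 'order_status': 'shipped'}
```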

Step 3: Monitor and Audit AI Agents

Actionable steps:

- Enable logging:

- Log all inputs/outputs for AI agents (e.g., prompts, responses, data accessed).

- Store logs in a secure SIEM (e.g., Splunk, Elasticsearch) for analysis.

- Anomaly detection:

- Use AI-powered threat detection (e.g., Darktrace, Vectra) to flag unusual behavior (e.g., an agent accessing a database it’s never queried before).

- Regular audits:

- Conduct quarterly audits of AI agent behavior.

- Use OWASP’s LLM Security Checklist to assess risks.
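Logging every input and output is easiest when each agent tool call goes through one wrapper that emits a structured record a SIEM can ingest. The decorator below is a sketch: the field names, the JSON-lines format, and the `lookup_order` tool are hypothetical, and in practice the records would be shipped to Splunk or Elasticsearch rather than a local logger. Because these logs now contain prompts and data, they need the same access controls as the data they describe.

```python
import functools
import json
import logging
import time
import uuid
from typing import Any, Callable

audit_log = logging.getLogger("agent.audit")


def audited(agent_name: str, resource: str) -> Callable:
    """Decorator factory: log inputs, output or error, and the resource for each call."""

    def decorator(func: Callable[..., Any]) -> Callable[..., Any]:
        @functools.wraps(func)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            entry: dict[str, Any] = {
                "call_id": str(uuid.uuid4()),
                "ts": time.time(),
                "agent": agent_name,
                "resource": resource,
                "inputs": {"args": args, "kwargs": kwargs},
            }
            try:
                result = func(*args, **kwargs)
                entry["output"] = result
                return result
            except Exception as exc:
                entry["error"] = repr(exc)
                raise
            finally:
                # One JSON object per line; default=str stringifies values
                # json can't serialize instead of failing the call.
                audit_log.info(json.dumps(entry, default=str))

        return wrapper

    return decorator


@audited(agent_name="support-bot", resource="crm.orders")
def lookup_order(order_id: str) -> dict:
    """Hypothetical agent tool; a real one would query the CRM."""
    return {"order_id": order_id, "status": "shipped"}


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO, format="%(message)s")
    lookup_order("A-1001")  # writes one JSON audit line to stderr
```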

Tools to use:

- Splunk: For log analysis and anomaly detection.

- Darktrace: For AI-powered threat detection.

- OWASP LLM Security Checklist: For auditing AI agents.
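Commercial tools build much richer behavioral models, but the specific signal named above, an agent querying a database it has never touched before, only needs a per-agent baseline. The sketch below assumes a hypothetical learning period during which every access is treated as normal; after `freeze()`, any new (agent, resource) pair is reported until someone approves it. Feeding it agent and resource names from your audit logs is left as an assumption.

```python
from collections import defaultdict


class FirstAccessDetector:
    """Flags an agent's first access to a resource after a learning period."""

    def __init__(self) -> None:
        # agent name -> resources seen while learning (or approved later)
        self.baseline: defaultdict[str, set[str]] = defaultdict(set)
        self.learning = True

    def freeze(self) -> None:
        """End the learning period; unseen accesses now count as anomalies."""
        self.learning = False

    def observe(self, agent: str, resource: str) -> bool:
        """Record an access and return True if it should raise an alert."""
        if self.learning:
            self.baseline[agent].add(resource)
            return False
        # New pairs are reported, not auto-added, so an analyst decides via approve().
        return resource not in self.baseline[agent]

    def approve(self, agent: str, resource: str) -> None:
        """Add a reviewed access to the baseline so it stops alerting."""
        self.baseline[agent].add(resource)


detector = FirstAccessDetector()
for resource in ("crm.orders", "crm.orders", "kb.articles"):
    detector.observe("support-bot", resource)  # learning period
detector.freeze()

print(detector.observe("support-bot", "crm.orders"))       # → False
print(detector.observe("support-bot", "finance.payroll"))  # → True
```

Not auto-adding novel accesses is a deliberate choice: otherwise an agent that starts reading a sensitive table would alert once and then become "normal".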

Step 4: Secure the AI Supply Chain

Actionable steps:

- Vet dependencies:

- Use npm audit, Dependabot, or Snyk to scan for malicious packages.

- Pin dependencies to specific versions to avoid supply-chain attacks.

- Isolate training data:

- Use differential privacy during training (for example, DP-SGD) to limit how much the model can learn about any individual record.

- Never use production data for training; use synthetic data instead.

- Model hardening:

- Reduce memorization of sensitive data, for example by deduplicating training sets and scrubbing PII before fine-tuning.

- Use adversarial training to defend against model inversion attacks.
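Pinning and install-script review can be partly automated. In `package-lock.json` versions 2 and 3, each entry under `packages` carries a `version`, usually an `integrity` hash, and a `hasInstallScript` flag for packages with preinstall, install, or postinstall scripts, the mechanism that lets a package run code automatically during `npm install`. The sketch below flags range specifiers in `package.json`, plus lockfile entries that run install scripts or lack an integrity hash. The sample manifests and package names are hypothetical, and a report like this complements, rather than replaces, `npm audit` or Snyk.

```python
import re
from typing import Any

# Exact versions such as "1.2.3" or "1.2.3-beta.1"; ranges like ^1.2.3, ~1.2, or * fail.
EXACT_VERSION = re.compile(r"^\d+\.\d+\.\d+(?:[-+][0-9A-Za-z.+-]+)?$")


def unpinned_dependencies(package_json: dict[str, Any]) -> list[str]:
    """List package.json dependencies declared with a range instead of an exact version."""
    flagged = []
    for section in ("dependencies", "devDependencies"):
        for name, spec in package_json.get(section, {}).items():
            if not EXACT_VERSION.match(spec):
                flagged.append(f"{name}@{spec}")
    return flagged


def lockfile_findings(lock: dict[str, Any]) -> dict[str, list[str]]:
    """Scan a v2/v3 package-lock.json for install scripts and missing integrity hashes."""
    findings: dict[str, list[str]] = {"install_scripts": [], "no_integrity": []}
    for path, meta in lock.get("packages", {}).items():
        if path == "" or meta.get("link"):
            continue  # "" is the root project; link entries point at local folders
        label = f"{path.removeprefix('node_modules/')}@{meta.get('version', '?')}"
        if meta.get("hasInstallScript"):
            findings["install_scripts"].append(label)  # runs code during npm install
        if "integrity" not in meta:
            findings["no_integrity"].append(label)  # content can't be hash-checked
    return findings


# Hypothetical manifests; in practice, json.load() the real package.json and package-lock.json.
pkg = {"dependencies": {"example-build-tool": "^2.0.0", "left-pad": "1.3.0"}}
lock = {
    "lockfileVersion": 3,
    "packages": {
        "": {"name": "demo-app"},
        "node_modules/example-build-tool": {
            "version": "2.0.0",
            "integrity": "sha512-EXAMPLE",
            "hasInstallScript": True,
        },
        "node_modules/left-pad": {"version": "1.3.0"},
    },
}

print(unpinned_dependencies(pkg))  # → ['example-build-tool@^2.0.0']
print(lockfile_findings(lock))
# → {'install_scripts': ['example-build-tool@2.0.0'], 'no_integrity': ['left-pad@1.3.0']}
```

Running `npm ci --ignore-scripts` in CI goes further and stops install scripts from executing at all, though packages that genuinely need a build step then have to be handled separately.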

Example: Before deploying a custom LLM, scan its training data for PII using tools like Amazon Macie or Google DLP.

Key Takeaways

- Agentic workflows expand attack surfaces by autonomously accessing and sharing data, often without oversight.

- Supply-chain risks (e.g., malicious npm packages) and training data leaks (e.g., Samsung’s ChatGPT incident) are major threats in 2026.

- Traditional security tools (DLP, CASBs) fail to detect AI-driven leaks because agents can transform or obfuscate data and often operate outside sanctioned apps.

- Shadow AI is rampant—68% of employees use unvetted AI tools, per Salesforce.

- Actionable fixes:

- Map your AI attack surface (inventory agents, track data flows).

- Implement AI-specific controls (input/output validation, least-privilege access).

- Monitor and audit agents (enable logging, use anomaly detection).

- Secure the AI supply chain (vet dependencies, harden models).

- For extra protection, use GhostShield VPN’s WireGuard encryption to secure data flowing between AI agents and APIs.

The rise of agentic workflows isn’t just a trend—it’s a fundamental shift in how data is processed and shared. Organizations that fail to adapt will face breaches, fines, and reputational damage. The good news? With the right tools and strategies, you can harness the power of AI without exposing your secrets. Start auditing your agentic workflows today—before it’s too late.
